Work Environment

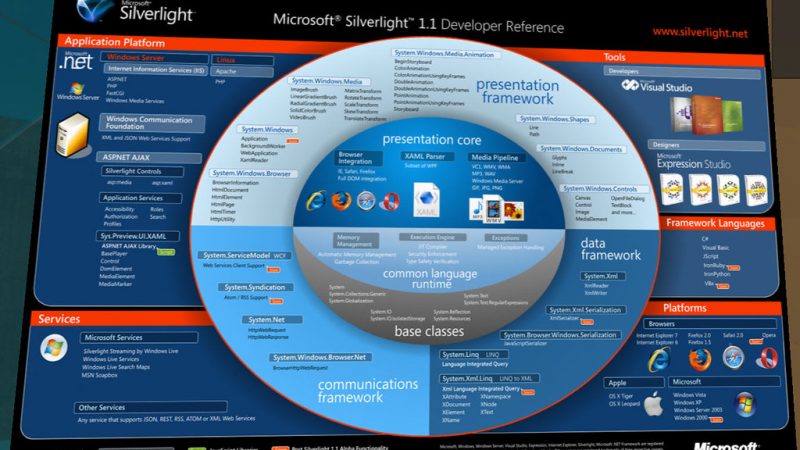

Benefits of Essential Oils

Discover the many benefits of essential oils in this informative article. Drawing on published research, we look at how these natural extracts can improve sleep and relaxation, enhance mood and emotional well-being, boost the immune system, provide pain relief, and benefit skincare and haircare routines.

Whether you’re seeking a holistic approach to wellness or looking to incorporate natural remedies into your daily routine, essential oils offer a versatile and effective solution.

Read on to unlock the potential of these powerful plant-based extracts.

Improved Sleep and Relaxation

Improved sleep and relaxation can be achieved through the use of essential oils. Essential oils have been used for centuries as natural remedies for various ailments, including sleep disorders and stress-related conditions. Research has shown that certain essential oils, such as lavender and chamomile, have calming properties that can help promote better sleep and induce feelings of relaxation.

The soothing aroma of essential oils can help reduce anxiety and stress, allowing people to unwind and prepare for a good night’s sleep. When essential oils are inhaled or applied topically, their active compounds can interact with the brain’s limbic system, which is responsible for emotions and memory. This interaction can lead to improved focus and concentration during the day, as well as more restful sleep at night.

Whether diffused in the air, added to a bath, or used in massage therapy, essential oils can create a serene environment that promotes relaxation and reduces stress levels. Incorporating essential oils into a daily routine can provide a natural and effective way to achieve improved sleep and relaxation, leading to a greater sense of well-being and freedom from sleep-related issues.

Enhanced Mood and Emotional Well-being

The application of specific aromatic extracts has been shown to positively influence an individual’s emotional state and overall sense of well-being. Essential oils have long been used for their therapeutic properties, and recent research has shed light on their ability to enhance mood and promote emotional well-being.

When used in aromatherapy or applied topically, certain essential oils can help manage stress and promote relaxation, leading to a more balanced emotional state. For example, lavender oil has been found to reduce anxiety and improve sleep quality, while bergamot oil has been shown to elevate mood and reduce feelings of sadness and fatigue.

Additionally, essential oils like peppermint and rosemary can enhance mental clarity and focus, aiding in cognitive function and productivity. Incorporating these natural remedies into daily routines can provide a holistic approach to emotional well-being and stress management.

Boosted Immune System and Overall Health

Research has shown that the application of specific aromatic extracts can have a positive impact on an individual’s immune system and overall health. Essential oils, derived from plants, contain natural compounds that possess powerful immune-boosting properties. These oils, when used appropriately, can provide immune system support and promote natural healing.

Certain essential oils, such as tea tree oil, oregano oil, and eucalyptus oil, have been found to possess antimicrobial and antiviral properties. These properties can help strengthen the immune system by combating harmful pathogens and preventing infections. Additionally, essential oils like lavender and frankincense have been shown to possess anti-inflammatory properties, which can further support the immune system by reducing inflammation and promoting overall health.

Furthermore, the inhalation of essential oils through methods like diffusing or steam inhalation can also have a positive impact on the immune system. Inhalation allows the aromatic compounds to enter the respiratory system, where they can exert their immune-boosting effects.

Effective Pain Relief and Management

Pain relief and management can be achieved through the use of certain aromatic extracts known for their analgesic properties. Essential oils, derived from plants, provide a drug-free alternative for people seeking natural healing methods. These oils contain active compounds that studies have found can help alleviate pain and inflammation.

For example, lavender essential oil has been shown to possess analgesic properties and can effectively reduce pain associated with headaches and migraines. Peppermint oil, on the other hand, has been found to have a cooling effect that can soothe muscle aches and joint pain. Eucalyptus oil is known for its anti-inflammatory properties, making it a popular choice for pain relief in conditions such as arthritis.

Skincare and Haircare Benefits

Aromatic extracts derived from plants offer natural solutions for skincare and haircare needs, providing a gentle and effective way to enhance the health and appearance of the skin and hair.

Essential oils have gained popularity in recent years for their numerous benefits in beauty routines. For skincare, essential oils can be used in various ways, such as in hydrating face masks. These masks help to moisturize and nourish the skin, leaving it soft, supple, and glowing.

Additionally, natural hair serums containing essential oils can help improve hair health and manage common hair problems such as dryness, frizz, and breakage. The oils penetrate deep into the hair shaft, providing hydration and protection and promoting hair growth.

With their natural properties and versatility, essential oils are a valuable addition to any skincare and haircare routine.

Conclusion

In conclusion, essential oils offer a range of benefits for sleep, mood, the immune system, pain relief, and skincare.

Scientific evidence supports their effectiveness in promoting relaxation, improving emotional well-being, boosting overall health, and providing pain relief.

Additionally, these oils have been found to have positive effects on the skin and hair.

Incorporating essential oils into one’s routine can contribute to overall well-being and enhance various aspects of physical and mental health.

What Is Reflexology?

Reflexology is a form of manual therapy that involves applying pressure to certain points on the feet and hands. These “body maps” are said to correspond with different parts of the body and to help regulate organ function. It is a treatment that may be helpful for those suffering from various conditions, including pain, stress and anxiety. However, many reflexologists caution that more research is needed into its effectiveness as a healing modality.

Reflexologists believe that the feet and hands are mirror images of every part and organ in the body. They say that by applying pressure in a specific pattern to these critical areas, the nervous system is stimulated and the body is encouraged to heal itself. In practice, the therapist works to relieve patterns of tension and help restore balance to the entire body. In addition to the traditional foot massage, some reflexologists offer hand and ear reflexology as well.

In a reflexology session, the client remains fully clothed and sits in a massage chair or lies on a table. During this time, the practitioner will ask questions about health history and lifestyle to determine where to work on the feet or hands. Reflexologists will also check for open wounds, rashes, bunions, or other conditions that would hinder the treatment.

During the reflexology session, the practitioner will apply gentle pressure to the feet and may work on specific points or zones on the soles of the feet. These areas are believed to correspond to the brain, lungs, liver and heart, among other vital organs in the body. The therapist will also massage the feet to relax the muscles and encourage blood flow.

Some people claim that reflexology sessions help ease back pain, headaches and menstrual symptoms as well as improve digestion and boost immune function. Other claims include restoring hormonal balance, boosting fertility and reducing the effects of cancer treatments, such as nerve damage.

It is important for anyone considering a reflexology session to seek out a licensed, professional reflexologist. There are several groups that offer training in reflexology, and the Reflexologists Association of America has established a set of standards that must be met to become a certified reflexologist. These include at least 300 hours of foot and hand reflexology education, 160 of which must be in a live classroom setting with an instructor.

In addition, the practitioner must pass a written exam and be an RAA member to provide reflexology services. Anyone who provides reflexology without meeting these requirements is practicing unlicensed massage. It is also a good idea to talk to a healthcare provider before receiving this type of massage, as it can cause adverse reactions in some people. This is especially true if you have circulatory problems, blood clots, gout, thyroid issues or athlete’s foot. In addition, pregnant women should only receive reflexology under the guidance of a licensed practitioner and only after a doctor’s approval. However, for most people, a reflexology session is generally safe and can be extremely relaxing.

The Pros and Cons of Online Dating

For many people looking for love, online dating can seem like the best way to find a potential partner. But it’s not without its risks and downsides. In fact, a number of experts have found that online dating can be more harmful than beneficial.

Some of the most common disadvantages include negative experiences, the risk of misrepresentation, and a lack of social skills. For example, a lot of people get into the habit of judging others based on their profile photos. This can lead people to make assumptions about their dates before meeting them, which can be dangerous. Moreover, the lack of physical contact and conversation can cause problems. It’s also important to remember that people you meet online can often turn out to be very different in person.

While these disadvantages may be true, there are ways to overcome them. For instance, you can always choose not to respond to someone who doesn’t seem right for you. And you can try to develop more social skills by being more active in your community and by getting out more.

However, if you’re serious about finding love, it’s important to be aware of the potential dangers and take steps to avoid them. This is why it’s crucial to be selective about who you date and only give out your contact information to people you think are trustworthy.

Another issue is that it’s easy for people to hide parts of their personalities online. For instance, someone may claim to be a certain age when they’re really much older. It’s also possible for them to lie about their job or other aspects of their life. This can be particularly problematic if you’re in a long-distance relationship.

This is also a big problem with dating apps, since people can hide their phone number or even change their name. This can make it very difficult to get in touch with someone if things go south. Additionally, people who use dating apps can “ghost” their dates without giving any reason. This can be incredibly heartbreaking for those who have been seriously dating someone for a while.

It’s also important to keep in mind that online dating can be expensive. For example, it’s not uncommon for people to spend a lot of money on a single date, only to discover that the person they’re dating isn’t who they said they were. This can be especially frustrating for women, who are more likely to feel betrayed by this kind of behavior. In addition, some apps have been known to promote a “hookup culture” where women pay for men’s drinks and other expenses. This can be very damaging to women’s self-esteem.

Gambling Casino Games

While some people think gambling is wrong, there are many legitimate, legal forms of casino gambling. Some of these casino games are craps, video poker, Sic Bo, and sports betting. These games can be very profitable if you learn how to play them properly and choose the right game for your own style. This article provides basic information about the most common games. Read on to discover why they are so popular and how to get started playing them.

Sports betting

The appeal of sports betting has been proven by countless people who watch games online and at local casinos. This exciting activity adds a level of fun for fans who can predict the result of a match. In contrast to casino games, which lack passion and excitement, sports betting is a fun and exciting way to watch games and win cash. In addition to offering increased suspense, humour, and excitement, sports betting provides an opportunity to make money in a safe and legal manner.

Video poker

The game of video poker has many variations. Some variations are more profitable than others, but the basic principles are the same. Video poker is based on the poker hand rankings, though some variations offer different hand rankings. The rules of each game affect the probability of a particular hand occurring, so the odds of a royal flush differ from one variation to another. The hand rankings are described in the table below.
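To give a sense of scale, here is a minimal Python sketch that works out the chance of being dealt a royal flush on the initial five cards. It assumes a single standard 52-card deck with no wild cards and ignores the draw, so it covers only the simplest case; variations with wild cards or different draw rules give different figures.

```python
from fractions import Fraction
from math import comb


def royal_flush_probability() -> Fraction:
    """Chance that a fresh five-card deal from one 52-card deck is a royal flush."""
    royal_flushes = 4               # one per suit: 10, J, Q, K, A of the same suit
    five_card_hands = comb(52, 5)   # 2,598,960 possible five-card deals
    return Fraction(royal_flushes, five_card_hands)


print(royal_flush_probability())  # prints 1/649740
```

Using exact fractions avoids rounding, which matters when the probabilities are this small.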

Craps

Players make bets on dice in the casino game of craps by placing chips on the layout. Players are responsible for placing their wagers on the layout correctly, and they must follow the dealer’s instructions. Most successful players focus their strategy on knowing which bets to place and which ones to avoid. Most winning players stick with the pass line, which has one of the lowest house edges in the casino, and avoid the field and proposition bets. They also tend to avoid the Big 6 and Big 8 bets.
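Bet selection matters because each bet carries its own house edge. The sketch below assumes the standard pass line rules (7 or 11 wins on the come-out roll; 2, 3 or 12 loses; any other total becomes the point, which must be rolled again before a 7) and enumerates the 36 two-dice outcomes with exact fractions to find the pass line's win probability and house edge.

```python
from fractions import Fraction
from itertools import product


def two_dice_ways() -> dict[int, int]:
    """Number of ways to roll each total with two six-sided dice (36 outcomes)."""
    ways: dict[int, int] = {}
    for a, b in product(range(1, 7), repeat=2):
        ways[a + b] = ways.get(a + b, 0) + 1
    return ways


def pass_line_win_probability() -> Fraction:
    ways = two_dice_ways()
    # Come-out roll: a 7 or 11 wins immediately (2, 3 and 12 lose).
    p_win = Fraction(ways[7] + ways[11], 36)
    # Any other total becomes the point; the bet then wins if the
    # point is rolled again before a 7.
    for point in (4, 5, 6, 8, 9, 10):
        p_point = Fraction(ways[point], 36)
        p_make = Fraction(ways[point], ways[point] + ways[7])
        p_win += p_point * p_make
    return p_win


win = pass_line_win_probability()
house_edge = 1 - 2 * win   # even-money bet: edge = P(lose) - P(win)
print(win, house_edge)     # prints 244/495 7/495
```

The edge of 7/495 is roughly 1.41% of each bet; the same enumeration approach can be used to compare other craps bets.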

Sic Bo

In the classic form of Sic Bo, players bet on the total of three dice rolled by the dealer. The different betting options are marked on the table layout, and players place a betting token on one of these segments. A ‘Big’ bet, for example, wins if the total is between 11 and 17 points, provided the roll is not a triple. Other wagers, such as ‘Pot’ bets, require a certain number of rolls before they become a winning or losing bet.
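The odds of the ‘Big’ bet can be checked by brute force, since three dice have only 216 equally likely outcomes. This minimal Python sketch assumes the common house rule that Big loses on any triple; the `triples_lose` flag is an illustrative parameter that shows the figure without that rule.

```python
from fractions import Fraction
from itertools import product


def big_bet_probability(triples_lose: bool = True) -> Fraction:
    """Chance that a Sic Bo 'Big' bet (total 11 to 17) wins on one roll."""
    outcomes = list(product(range(1, 7), repeat=3))  # 216 equally likely rolls
    wins = 0
    for roll in outcomes:
        is_triple = roll[0] == roll[1] == roll[2]
        if 11 <= sum(roll) <= 17 and not (triples_lose and is_triple):
            wins += 1
    return Fraction(wins, len(outcomes))


print(big_bet_probability())                    # prints 35/72 (105 of 216 rolls)
print(big_bet_probability(triples_lose=False))  # prints 107/216
```

Only two triples (4-4-4 and 5-5-5) fall in the 11 to 17 range, which is why the triple rule changes the count by just two rolls.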

Roulette

The simplest roulette bets are on a single number, a group of numbers, or even or odd. There are also several ways to bet on groups of numbers, such as red or black. The odds for these bets depend on the number of zeroes on the roulette wheel. For instance, a bet on one number pays 35 to 1, but it wins on average once in 37 spins on a single-zero wheel and once in 38 spins on a double-zero wheel.
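The effect of the zeroes can be worked out directly. This Python sketch assumes the standard single-number payout of 35 to 1 and a wheel with 36 numbered pockets plus one or two zeroes; the function name is illustrative.

```python
from fractions import Fraction


def straight_up_edge(zeroes: int) -> Fraction:
    """House edge on a single-number bet paying 35 to 1.

    The wheel is assumed to have 36 numbered pockets plus `zeroes` green pockets.
    """
    pockets = 36 + zeroes
    p_win = Fraction(1, pockets)
    # Player's expected result per unit staked: win 35, otherwise lose the stake.
    expected = p_win * 35 - (1 - p_win) * 1
    return -expected


for zeroes in (1, 2):
    edge = straight_up_edge(zeroes)
    print(zeroes, edge, f"{float(edge):.2%}")
# 1 1/37 2.70%
# 2 1/19 5.26%
```

The payout stays the same on both wheels, so the extra zero only lowers the chance of winning, roughly doubling the house edge.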

Craps variations

Although the game of craps has been around for centuries, it continues to be a top favorite in casinos. Over time, various modifications have been made to improve the game, and some of these have evolved into completely new games, known as variations. Craps variations differ depending on the casino’s locale. The most popular versions of the game are played in live casinos, while variations offered online are generally more casual and easy to learn.

Baccarat

If you want to play a game that is considered one of the oldest in the world, baccarat might be the one for you. The game is played on a large table, similar to a craps table, with three dealers and up to 12 players. Unlike brick-and-mortar casinos, online casinos can offer this game on their websites, because modern game software developers have built live baccarat versions that can be played in real time.

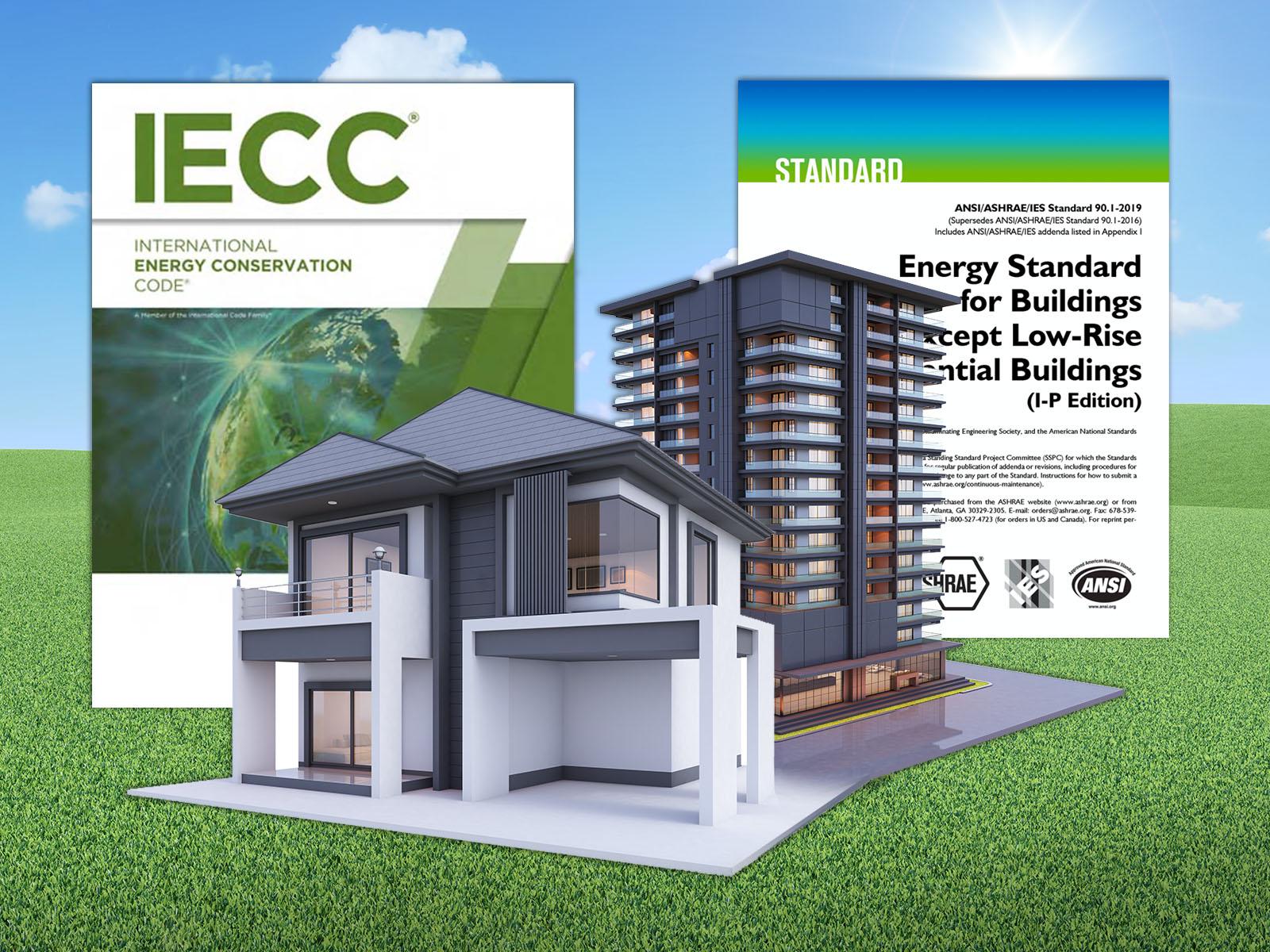

Energy Saving Building Codes

A mandatory energy-saving building code has been passed in Telangana and Andhra Pradesh, which are now separate states. The new energy-saving code was adopted with the help of the Natural Resources Defense Council (NRDC) and city and state officials. To encourage energy-efficient construction, the two states teamed up with the NRDC and the Administrative Staff College of India. Energy-saving building codes are an important part of the sustainable construction process and will be adopted in both states after the bifurcation.

Although the results of conservation efforts are not easily discernible, substantial progress has been made in monitoring and assessing these projects. Energy-saving building measures are becoming an accepted practice at both the local and international level. The benefits for consumers and governments are staggering, especially when heavy subsidies are included. In addition, the cost-effectiveness analysis can be tailored to suit prevailing prices and the extent of governmental subsidies. With these benefits in mind, governmental programs are starting to gain wider acceptance.

A key component of an energy-efficient building code is its stringency. New buildings should be at least 20% more energy-efficient than a similar existing building. This allows cities to make significant energy-saving improvements without having to rewrite the entire code, which benefits both the economy and the environment. If the energy-saving building code is adopted, it will result in an increase in sales and profitability. It will also reduce carbon emissions from buildings, which account for about 40% of all greenhouse gas emissions in the U.S. and up to 75% in some cities.

The cost-effectiveness of energy-saving building improvements is crucial for reducing energy bills, and the savings can help to offset any increase in initial capital cost. Many energy-saving technologies are inexpensive, with benefits far outweighing the initial costs. These costs can be recovered over time as the energy savings offset the increased capital cost. In addition, over a building’s lifetime, the total costs of energy-saving buildings can be much lower than those of traditional buildings. The energy-saving improvements are proven and effective.
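A simple way to see how energy savings recover a higher up-front cost is the payback period: the extra capital cost divided by the annual savings. The Python sketch below is a minimal, undiscounted version; the optional `subsidy` parameter reflects the point above about government subsidies, and all figures in the example are hypothetical.

```python
def simple_payback_years(extra_capital_cost: float, annual_savings: float,
                         subsidy: float = 0.0) -> float:
    """Years until cumulative energy savings repay the extra up-front cost.

    Simple (undiscounted) payback: it ignores interest, energy-price changes
    and maintenance, so it is only a first screening figure.
    """
    if annual_savings <= 0:
        raise ValueError("annual_savings must be positive")
    # A subsidy reduces the extra cost the owner has to recover.
    net_extra_cost = max(extra_capital_cost - subsidy, 0.0)
    return net_extra_cost / annual_savings


# Hypothetical figures for illustration only.
print(simple_payback_years(12_000, 1_500))                 # prints 8.0
print(simple_payback_years(12_000, 1_500, subsidy=3_000))  # prints 6.0
```

A fuller cost-effectiveness analysis would discount future savings and use local energy prices, but the simple ratio already shows why subsidies shorten the payback time.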

Buildings should be refurbished as soon as possible if they are not yet certified as energy-efficient. Several factors feed into energy-saving building regulations, and one of the most important is building insulation. High-quality insulation is essential for a building’s thermal comfort. As long as the building is not older than 15 years, energy-saving measures will improve its energy efficiency, and a building can save up to 60% of its original energy bill.

A study based on governments’ main intentions to reduce future energy use will help countries reduce their carbon footprint. The report includes two projections for future energy demand based on international comparisons. The upper projection indicates modest improvements in energy efficiency, while the lower projection shows the final energy consumption per dwelling. The study also draws on the main energy-saving policies of several countries. This makes energy-saving building a necessity for the country and an important part of the overall economic structure.

How to Use Skincare Tools

If you’ve ever wondered how to use skincare tools, you’re not alone. Many people are confused about how to use facial cleansing brushes, derma rollers, and gua sha stones, and these products all work differently. In this article, we’ll explain the differences between these tools and their benefits, and give you some tips to help you use them properly. This article was written by Joanna Vargas, a celebrity facialist, author, and founder of her own skin care line.

Regardless of the tool you choose, it is essential to know your skin’s condition before you shop. While skin care products have always been popular, at-home skincare tools have become increasingly popular as well. You can follow your favorite celebrity’s skin care routine to get similar results. Use the tips and tricks outlined here to find the right tools for your skin type. Then, start using them! Once you’ve mastered the basics, you’ll find that using skincare tools is simple!

Despite their ease of use, skincare tools can be confusing. Using the right tool can bring dermatologist-quality treatments to your home. A vibrating facial tool, for example, can tighten skin in 10 minutes; one such device was recently awarded the 2021 Cosmo Beauty Award. It also stimulates facial muscles and works to unclog pores. With all of the benefits of these tools, you’ll have a clear complexion and feel more confident!

When choosing a tool for at-home skin care, you should consider its function. Most facial rollers are useful for massage, but there are also other types that can be used to improve circulation. The gua sha tool, used by practitioners of traditional Chinese medicine, is a smooth-edged stone that is stroked across the skin to release tension in the face. The gua sha tool should be used once a week. Face rollers are one of the simplest tools and are often made of jade or rose quartz.

An ice roller is another type of facial roller. Unlike other facial rollers, the ice roller is stored in the freezer, which helps reduce inflammation and redness. It can also contour your face in less time than a regular facial roller. After use, be sure to clean the roller thoroughly and store it somewhere away from food. If you’re new to using facial rollers, you should try one of these products!

Jade rollers have been used for centuries. These tools date back to seventh-century China and have become ubiquitous in the beauty world. They help promote circulation by massaging facial muscles and tissues. The jade roller is particularly effective for massaging in moisturizers and serums. The jade roller also comes in dual-sided versions, which have a small side specifically for the eye area. These tools are great for targeting problem areas of your face and reducing puffiness.

The Benefits of a Home CCTV System

Installing a home CCTV camera is an excellent way to keep an eye on visitors to your home. It can help you keep an eye on elderly relatives or monitor your pets while you’re away. Whether you’re out of town or on vacation, a security system makes it easier to protect your family. With a home CCTV camera, you can see who’s at the door, and if you’re out of town, you’ll never be alone again.

The main benefit of a CCTV system is security. It is often used as a visual deterrent to potential intruders. You can install a home CCTV camera outdoors or indoors. A modern system records video even when you’re not there, and digital cameras can record up to a month’s worth of footage. Some models also offer motion-activated recording and give you access to the footage even while you’re away.
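How much footage a camera can keep depends on its video bitrate and the size of its storage. The Python sketch below estimates the disk space needed for a given number of days; the 2 Mbps bitrate in the example is a hypothetical figure (real bitrates depend on resolution, frame rate and compression), and `recording_fraction` is an illustrative way to model motion-activated recording.

```python
def storage_needed_gb(bitrate_mbps: float, days: float,
                      recording_fraction: float = 1.0) -> float:
    """Disk space (decimal gigabytes) for `days` of footage from one camera.

    bitrate_mbps: average video bitrate in megabits per second (assumed)
    recording_fraction: share of the time the camera actually records,
        1.0 for continuous recording, lower for motion-activated mode
    """
    if not 0 <= recording_fraction <= 1:
        raise ValueError("recording_fraction must be between 0 and 1")
    seconds = days * 24 * 60 * 60 * recording_fraction
    megabits = bitrate_mbps * seconds
    return megabits / 8 / 1000   # megabits -> megabytes -> gigabytes


# Hypothetical camera recording at 2 Mbps for a month.
print(storage_needed_gb(2, 30))                 # prints 648.0
print(round(storage_needed_gb(2, 30, 0.1), 1))  # prints 64.8
```

The second line shows why motion-activated recording lets the same drive hold far more days of footage.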

Although capturing images with a CCTV system is not itself a breach of data protection laws, it is important to comply with them and respect the rights of the people whose images are captured. A CCTV camera can be mounted on a wall or built into a doorbell. In all cases, you must abide by data protection laws, so when installing a home CCTV system, consider privacy and data protection from the start.

A home CCTV camera can enhance security by deterring potential intruders from entering your home. A CCTV system can be set up to record video inside and outside the home, even when you are away from the property. A high-quality model can record up to a month’s worth of video and will let you view the footage on a computer or tablet if needed.

While the costs of a home CCTV system may seem high, the benefits are many. These cameras can help protect your home and perimeter. A good home CCTV system deters intruders by acting as a visual deterrent. It also lets you record video even when you are away, and a high-quality camera can record up to a month of video. Moreover, a good one will let you monitor intruders with a remote app.

A home CCTV system can also enhance the security of your home. It provides visual deterrence to potential intruders and helps keep your home safe. It can be set up for both indoor and outdoor areas, and you can watch the video when you’re not at home. Moreover, a good camera will keep recording while you’re away and store the video for a month or more.

WHAT IS SEO & WHY IS IT IMPORTANT

You have probably heard a hundred times that search engine optimization (SEO) is an essential digital marketing tool, but even if you have a basic understanding of what it entails, you may not have a good grasp of this complex, multifaceted subject.

SEO is made up of many different facets, and knowing what they are and how they work is essential to understanding why SEO matters. In short, SEO is important because it makes your website more visible, which means more traffic and more opportunities to convert prospects into customers.

The Important Aspects of SEO

Gone are the days when keywords were the only SEO technique that mattered, but that does not mean they are no longer vital. The difference is that today, keywords need to be well researched, carefully chosen, and judiciously used in your content to be effective. But what exactly are keywords? Keywords are words and phrases that prospects use to find online content, and that brands can then use to connect with prospects who are looking for their products and services.

When researching keywords, look for ones with high search volume and low competition, and choose short-tail keywords (for example, dog), long-tail keywords (for example, terrier dogs for sale), and local keywords (for example, dogs for sale in Boston) to work into your content. You can also use keywords to optimize your titles, URLs, and other on-page SEO elements (more on this later).
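The keyword advice above can be turned into a small shortlisting routine. This is a minimal Python sketch under stated assumptions: the search-volume and competition numbers would come from whatever keyword research tool you use, the 0.3 competition cut-off is arbitrary, and treating phrases of one or two words as short-tail is a rule of thumb rather than a standard.

```python
from dataclasses import dataclass


@dataclass
class Keyword:
    phrase: str
    monthly_searches: int   # search volume reported by a keyword tool (assumed)
    competition: float      # 0.0 (low) to 1.0 (high), as reported by the tool


def keyword_type(phrase: str, locations: set[str]) -> str:
    """Rough classification; the two-word short-tail cut-off is an assumption."""
    padded = f" {phrase.lower()} "
    # Match whole words (or multi-word place names) to spot local keywords.
    if any(f" {loc.lower()} " in padded for loc in locations):
        return "local"
    return "short-tail" if len(phrase.split()) <= 2 else "long-tail"


def shortlist(keywords: list[Keyword], max_competition: float = 0.3) -> list[Keyword]:
    """Keep low-competition keywords, highest search volume first."""
    picked = [k for k in keywords if k.competition <= max_competition]
    return sorted(picked, key=lambda k: k.monthly_searches, reverse=True)


print(keyword_type("dog", {"boston"}))                      # short-tail
print(keyword_type("terrier dogs for sale", {"boston"}))    # long-tail
print(keyword_type("dogs for sale in Boston", {"boston"}))  # local
```

Keeping the classification separate from the shortlist makes it easy to check that your final list still mixes short-tail, long-tail, and local terms.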

Content

Content is an essential part of SEO because it is the vehicle you use to reach and engage audiences. For example, if you owned a plant nursery and wanted to increase your visibility, you could publish a series of blogs about gardening, choosing the right species of plants, growing tips, and more. If someone who wanted to learn about gardening went searching for this kind of advice, your blog would come up, and you would be able to build a relationship with that prospect by providing valuable information. When the time came for that prospect to buy a plant, yours would be the first nursery that came to mind. Today’s content has to be informative, but also interesting, relevant, engaging, and shareable. Content comes in various forms, such as:

- Web page content

- Videos

- Blogs

- Infographics

- Podcasts

- White papers and e-books

- Social media posts

- Local listings

Off-Page SEO

Off-page SEO involves optimization practices that happen away from your website rather than on it. The main technique used for off-page SEO is backlink building, since quality links from external websites tell search engines that your website is valuable and high quality, which helps build authority.

There are many approaches to backlink building, and some of the current best practices include guest blogging, creating infographics that are likely to be widely shared, and mentioning influencers in your content.

All About Website Design

Web design is a multi-step process that begins with visualizing and planning and then moves on to implementing the planned structure using certain tools. These tools range from coding languages such as HTML, CSS, and JavaScript and frameworks such as Node, Angular, React, and Bootstrap to website-building platforms such as WordPress, Wix, and many more.

How to Design a Website (Step by Step)

- Select a domain name: First, figure out why you need a website, whether it is an e-commerce site or a website for your salon. Then choose a domain name that fits that purpose, and make sure it is unique.

- Get web hosting: Once you have your unique domain name, the last thing to do before building a website is to find a place to host it. You will have to pay for hosting to ensure your website runs safely and smoothly.

- Find a website builder: The easiest way to build a website is with a website builder. Builders offer various plug-ins you can use depending on how you plan your website, such as a particular color theme, different navigation bars, and much more. You can also hire a software developer to build a website for you.

- Add content: Once you have chosen your theme and suitable plug-ins, you’re ready to add your content however you like.

- Launching: After all the steps are carefully completed, your website is ready to be launched, start getting engagement, and grow.

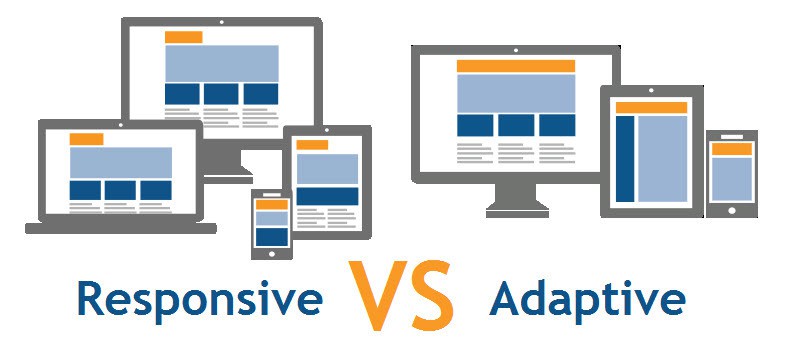

You have probably used the same websites on different devices, such as a laptop or desktop computer, a tablet, or a mobile phone. Have you ever wondered how a website works so smoothly on all of them? These websites are adaptive or responsive, which makes them compatible with all devices.

So, there are two types of websites to consider when designing your own: adaptive and responsive.

Adaptive websites use at least two versions of a site, each designed for a particular type of device. The site adapts either to the type of device or to the width of the browser. For device-based adaptation, when someone opens the website, the User-Agent field in the HTTP request tells the server what type of device is accessing the site. For browser width, a set of preset widths, called breakpoints, makes the website adapt to the browser’s size.
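The two adaptive approaches can be sketched in Python as server-side logic. The breakpoint values and layout names are hypothetical, and the User-Agent check is deliberately crude (real device detection is much messier); it only illustrates the idea of choosing one of several fixed versions of a site.

```python
# Hypothetical breakpoints (minimum width in CSS pixels) and layout names.
ADAPTIVE_LAYOUTS = [
    (0, "mobile"),
    (768, "tablet"),
    (1024, "desktop"),
]


def layout_for_width(width_px: int) -> str:
    """Pick the fixed layout whose breakpoint is the largest one <= width."""
    chosen = ADAPTIVE_LAYOUTS[0][1]
    for min_width, name in ADAPTIVE_LAYOUTS:  # breakpoints are in ascending order
        if width_px >= min_width:
            chosen = name
    return chosen


def layout_for_user_agent(user_agent: str) -> str:
    """Very rough guess from the User-Agent request header (illustrative only)."""
    ua = user_agent.lower()
    if "ipad" in ua or "tablet" in ua:
        return "tablet"
    if "mobi" in ua:  # most phone browsers include "Mobile" in the string
        return "mobile"
    return "desktop"


print(layout_for_width(800))  # tablet
print(layout_for_user_agent("Mozilla/5.0 (Windows NT 10.0; Win64; x64)"))  # desktop
```

Either function would select which pre-built version of the page the server sends back.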

A responsive website also uses breakpoints, in the form of CSS media queries, combined with fluid grids to size the layout for each device. The layout changes continuously as the browser is resized.
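By contrast, a responsive site ships a single stylesheet whose media queries adjust a fluid grid. The Python sketch below simply generates that CSS so its structure is visible; the `.grid` class name and the breakpoint values are hypothetical, and CSS Grid with `fr` units is just one common way to build a fluid grid.

```python
def responsive_grid_css(breakpoints: dict[int, int]) -> str:
    """Build CSS for a fluid grid whose column count changes at each breakpoint.

    breakpoints maps a minimum viewport width (px) to a column count.
    Columns are sized in fr units, so they stretch continuously between
    breakpoints instead of jumping between fixed page versions.
    """
    rules = []
    for min_width, columns in sorted(breakpoints.items()):
        rule = f".grid {{ display: grid; grid-template-columns: repeat({columns}, 1fr); }}"
        if min_width > 0:
            # Wrap every rule except the base one in a media query.
            rule = f"@media (min-width: {min_width}px) {{ {rule} }}"
        rules.append(rule)
    return "\n".join(rules)


print(responsive_grid_css({0: 1, 600: 2, 1024: 4}))
```

Because later media queries override earlier ones, the rules are emitted from the narrowest breakpoint upward (a "mobile-first" ordering).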

Now that you know the options for building an amazing website and the types of websites that suit your needs, all you need to do is follow the steps and start designing your website.

Why Multi-Touch Technology May Be the Ideal Match for the Office

Nearly every production employee is familiar with multi-touch technology, because we use it in daily life on tablets and smartphones. Tasks such as checking work email or looking up company information are easily done on a mobile device instead of a laptop or desktop computer. This kind of Human Machine Interface (HMI) is now attracting interest in industrial automation through multi-touch solutions in the office. On an industrial scale, the technology offers many advantages, such as relatively cheap hardware, fast and easy access to information, and near-universal familiarity. Using multi-touch with HMI systems also brings further advantages beyond these.

How Does Multi-Touch Technology Differ from an Ordinary Touchscreen?

It is common to confuse multi-touch with an ordinary touchscreen, and many people assume the two are the same because they lack accurate information. An ordinary touchscreen application registers only single touches, which are used to navigate between screens. In effect, it replaces hardware such as a keyboard and a pointing device like a mouse. A multi-touch screen, on the other hand, can register two or more contact points at the same time. This enables gestures such as pinch-to-zoom and gives it additional benefits over traditional touchscreens.
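Gestures make the difference concrete. A single-touch screen reports one contact point, while a multi-touch screen reports several at once, and a gesture is computed from how those points move relative to each other. The Python sketch below shows the arithmetic behind a two-finger pinch. The function names and the (x, y) tuple representation are hypothetical and not tied to any particular touch API or HMI product.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, y) position of one finger, in pixels


def touch_mode(points: Sequence[Point]) -> str:
    """Classify a frame of touch input by how many contact points it holds."""
    if not points:
        return "no touch"
    return "multi-touch" if len(points) >= 2 else "single-touch"


def pinch_scale(start: Tuple[Point, Point], current: Tuple[Point, Point]) -> float:
    """Zoom factor for a two-finger pinch: current finger spread / initial spread.

    Values above 1.0 mean the fingers moved apart (zoom in);
    values below 1.0 mean they moved together (zoom out).
    """
    start_spread = math.dist(*start)
    if start_spread == 0:
        raise ValueError("the two starting touch points must be distinct")
    return math.dist(*current) / start_spread


if __name__ == "__main__":
    print(touch_mode([(10.0, 20.0), (30.0, 40.0)]))             # multi-touch
    print(pinch_scale(((0, 0), (3, 4)), ((0, 0), (6, 8))))      # 2.0
```

A single-touch screen can never supply the second point, so it cannot compute this ratio at all. That is why gestures such as pinch-to-zoom require multi-touch hardware.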

Why Is Multi-Touch Technology Perfect for Industrial Automation?

- Low Implementation Costs:

Malaysia AV Discovery Technology is flooded with brand-new technologies. Some of these technologies are easy to adopt, while others are not. Some demand a paradigm shift or a significant investment that outweighs the advantages of the new technology. Multi-touch for HMI, however, is a technology that delivers many real gains without major investment decisions or changes to existing work practices. For example, operating systems such as Windows 7, with built-in multi-touch programming capabilities, have made this technology as simple to use as it is on tablets and smartphones. It does not require any expensive investment in hardware.

- Reducing Training Time and Costs:

Older operators are retiring at higher rates, and younger workers arrive at a beginner level. They must be trained before they can replace experienced employees. Businesses naturally want to reduce training costs and time, but they don’t always know the best way to do it. Using multi-touch solutions in the office can help, because most young workers have extensive experience with tablets and smartphones and will quickly become comfortable with multi-touch gestures on HMI systems. The interaction is intuitive and easy to learn.

- Inherently Suited to Industrial Conditions:

Devices that rely on a mouse and keyboard are exposed to contamination from dust and water, and they cannot match the design of a multi-touch screen. A multi-touch screen has no moving parts, so it is a better fit, and this ultimately extends the service life of the equipment. Off-the-shelf devices can be used in the field without any additional protective measures. A multi-touch screen is also a cheaper alternative to keyboards and pointing devices, which cost a lot of money to protect for hazardous areas such as Zone 1 or Zone 2. Multi-touch HMI screens feature a protective overlay of glass or a similar material that shields them from splashes, dirt, and extreme temperatures.